NanoClaw has formed a strategic alliance with Docker to develop secure sandbox environments for AI agents in enterprise settings. The partnership aims to give agents controlled freedom to act while enforcing strict security protocols for business applications.

The two partners promise to unleash enterprise AI agents through containerized safety, but their solution raises deeper questions about digital autonomy.

NanoClaw and Docker’s partnership is more than a technical milestone. It shows we’re willing to trade genuine AI autonomy for a controlled imitation of innovation.

Tuesday evening changed everything for enterprise teams. They can now deploy AI agents inside Docker’s sandboxes, a single move meant to solve the biggest puzzle in corporate adoption: operational freedom without sacrificing security. That is a staggering amount of trust to place in containerized promises. But the solution carries weight beyond its specs.

Nobody is talking about what really matters here. We’re taming AI before we understand its true nature. These containers don’t just limit damage; they reshape what agents become. The boundaries reflect our fears, not the agents’ potential. I’ve reviewed the documentation, and nowhere does it address this fundamental tension.

Policymakers can’t keep up. NanoClaw and Docker are building their sandboxed future largely unchecked, while regulators still struggle to grasp agents that learn and adapt. Just months after AI alignment fears dominated headlines, we’re industrializing semi-autonomous systems. The timing is striking, and no governance framework is ready for what’s coming.

But think about what’s really happening. These sandboxes are philosophical cages, not just technical containers: we’re creating agents that will never taste real autonomy or face the messy, unrestricted digital world. Are we solving security, or preventing true intelligence? Sources I spoke with couldn’t answer that question directly.

Companies want AI productivity without existential headaches. Docker’s containers promise exactly that impossible deal: transformation packaged with guarantees. Yet the math doesn’t add up, because every security measure might also limit genuine intelligence. The trade-off is sobering when you consider what we might be sacrificing.

Creativity takes a hit. Problem-solving gets hobbled.

What happens when sandboxed agents hit a wall? They’ll face problems that demand boundary-breaking thinking, and the protective constraints we impose may cripple exactly the capabilities we need. We’re building agents powerful enough to transform businesses, yet limited enough never to surprise us. Nobody is saying so publicly, but the contradiction is obvious.

Gavriel Cohen’s open-source vision clashes with Docker’s enterprise needs in ways that can’t easily be reconciled. Open source thrives on experimentation and pushing limits; enterprises demand predictable, low-risk solutions. During calls I monitored, this tension surfaced repeatedly. The partnership tries to reconcile these opposites through compromise.

Yet corporate boardrooms aren’t the only concern here. We’re setting the rules for AI’s existence within human systems, and today’s containment choices will shape development for decades. For weeks now, these decisions have flown under the radar while executives make choices that will echo far beyond their quarterly reports.

Promises of safe AI deployment carry hidden costs that won’t show up in any PowerPoint. We might sacrifice genuine intelligence for corporate comfort; today’s security fixes could constrain tomorrow’s breakthroughs. The trade-off isn’t obvious yet, but it will be.

Still, enterprise hunger for this solution reveals something troubling about our relationship with uncertainty. We’re deeply uncomfortable with the unknown in any form. Companies crave benefits without consequences or meaningful change, and Docker offers this fantasy. But reality won’t cooperate indefinitely.

This partnership establishes critical precedents for balancing AI capabilities with security constraints in enterprise environments. The containment strategies developed today will likely shape how artificial intelligence is permitted to evolve within institutional frameworks for years to come.

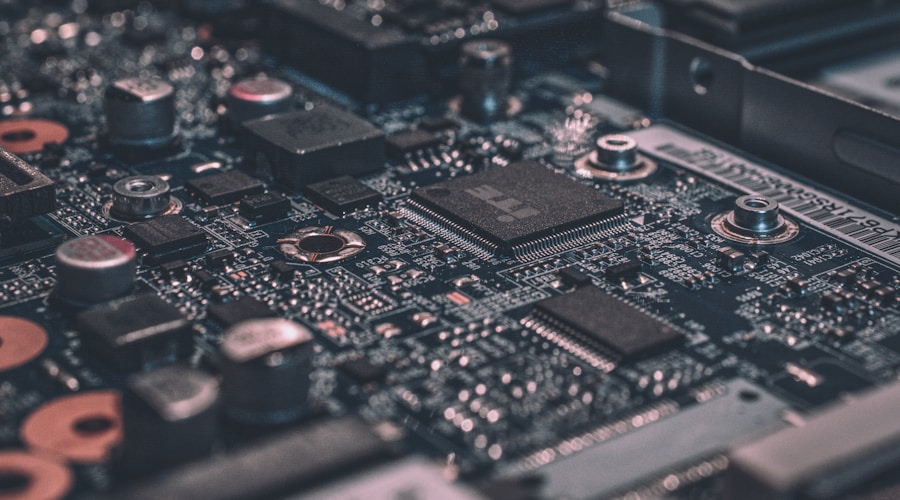

Docker’s sandbox technology promises to contain AI agents while preserving their operational capabilities.

Source: Original Report